Master RAG Systems: Complete Guide to Become an AI Engineer in 2026 covers five core components — retrieval augmented generation, vector databases, hybrid search, agentic reasoning, and context engineering.

These components reduce hallucinations and improve accuracy in production AI applications. This is the complete blueprint for developers and students building production-ready AI systems.

Most RAG tutorials show you the basics and stop there. But if you’re a student or developer trying to build something that actually works in production, the basics aren’t enough.

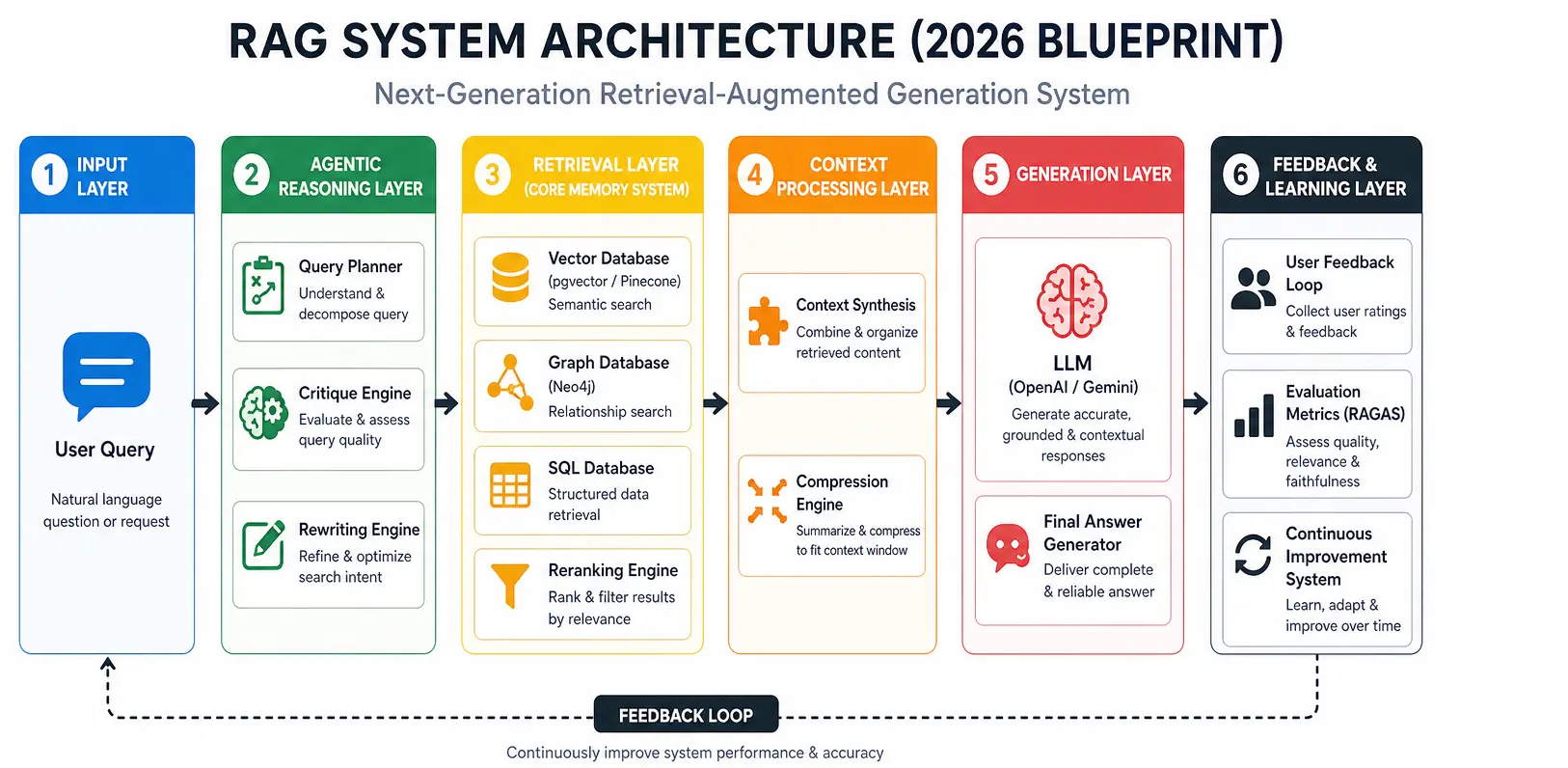

In 2026, a real production RAG system combines:

- Retrieval augmented generation to ground your AI responses in real data

- Vector databases like pgvector and Pinecone to store and search meaning, not just keywords

- Hybrid search combining semantic and keyword retrieval for better accuracy

- Agentic reasoning to make your pipeline smart enough to plan before it retrieves

- Context engineering to filter what actually goes into your LLM prompt

This is not a beginner hello-world tutorial. This is the complete RAG system blueprint for developers who want to build production-ready AI systems — and for students who want to think and work like a real AI engineer in 2026.

If you’ve ever asked “why does my RAG system give wrong answers?” or “how do I move from a notebook prototype to a real deployment?”, this guide answers both.

👉 What Makes RAG Systems Essential in 2026

RAG systems have become the foundation of modern AI applications because they allow models to access external knowledge instead of relying only on training data. In 2026, production RAG systems architecture is widely adopted in enterprises to reduce hallucinations, improve factual accuracy, and connect LLMs with live data sources such as databases, documents, and APIs.

The shift is from static intelligence to dynamic, retrieval-powered systems, where vector databases play a central role in storing and retrieving contextual knowledge efficiently.

This is the foundation of modern RAG architecture used in production AI systems.

For a complete beginner-friendly breakdown, see “learn RAG systems from basics”.

Why RAG systems matter today

- They reduce AI hallucinations by grounding responses in retrieved, real data

- They allow LLMs to stay updated without retraining by retrieving current information at query time

- They enable enterprise systems to use private and real-time knowledge stored in a vector database

- They improve accuracy in domain-specific applications like finance, healthcare, and legal

- They make AI systems production-ready instead of experimental through scalable system design

👉 How Agentic Reasoning Changes AI Retrieval Systems

Agentic reasoning adds decision-making capability into modern AI retrieval systems. Instead of directly fetching documents, the system first builds a strategy for how information should be searched and validated.

In production-grade AI retrieval architectures, this makes RAG systems more reliable by introducing planning, reasoning, and verification before generating responses.

Key components of agent-driven retrieval

- Intent Understanding Module – interprets user queries for smarter search execution in intelligent AI retrieval pipelines

- Query Optimization Layer – rewrites inputs for better semantic matching in embedding-based search systems

- Context Validation Engine – checks and filters retrieved results for accuracy in enterprise RAG system workflows
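The three components above can be sketched as a small plan-then-retrieve loop. This is only an illustration under stated assumptions: `plan_query`, `rewrite_query`, `validate_context`, and the tiny in-memory `CORPUS` are hypothetical names, and the rule-based logic stands in for what would normally be LLM calls and a real retriever.

```python
from dataclasses import dataclass

# Hypothetical in-memory corpus; a real system would query a vector database.
CORPUS = {
    "doc1": "pgvector adds vector similarity search to PostgreSQL.",
    "doc2": "BM25 is a keyword ranking function used in search engines.",
    "doc3": "Our refund policy allows returns within 30 days.",
}

STOPWORDS = {"what", "is", "the", "a", "an", "how", "does", "do", "to", "in", "of"}


@dataclass
class Plan:
    intent: str         # coarse label for the kind of question
    search_terms: list  # terms the retriever should look for


def tokenize(text):
    return [w.strip(".,?!").lower() for w in text.split()]


def rewrite_query(query):
    """Query optimization: drop filler words so matching focuses on content terms."""
    return [t for t in tokenize(query) if t and t not in STOPWORDS]


def plan_query(query):
    """Intent understanding: a rule-based stand-in for an LLM planning call."""
    terms = rewrite_query(query)
    intent = "policy" if "policy" in terms or "refund" in terms else "technical"
    return Plan(intent=intent, search_terms=terms)


def retrieve(plan):
    """Return {doc_id: overlap_count} for documents sharing a term with the plan."""
    hits = {}
    for doc_id, text in CORPUS.items():
        overlap = set(plan.search_terms) & set(tokenize(text))
        if overlap:
            hits[doc_id] = len(overlap)
    return hits


def validate_context(hits, min_overlap=2):
    """Context validation: keep only documents with enough matching terms."""
    kept = [d for d, n in hits.items() if n >= min_overlap]
    return sorted(kept, key=lambda d: -hits[d])


def agentic_retrieve(query):
    plan = plan_query(query)
    return plan, validate_context(retrieve(plan))
```

Calling `agentic_retrieve("How does pgvector similarity search work?")` plans first, then retrieves, then drops the weak BM25 match because it shares only one term with the query. The point of the sketch is the ordering of the steps, not the scoring rule.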

Why this improves modern RAG systems

This approach upgrades traditional pipelines into adaptive reasoning systems that can adjust their retrieval strategy dynamically.

In advanced AI system design for retrieval augmented generation, this leads to:

- better context selection

- reduced irrelevant retrievals

- improved response grounding

- higher accuracy in enterprise use cases

👉 Why Vector Databases Power Modern AI Systems

Vector databases are the core infrastructure behind modern AI retrieval systems. They store embeddings that represent semantic meaning rather than raw text, enabling machines to understand similarity based on context instead of keywords.

This is what allows AI systems to move beyond keyword matching into true semantic understanding. If you want to go deeper into how embeddings work, you can explore vector embeddings in AI systems.

In production RAG systems architecture in 2026, vector databases act as the memory layer that connects large language models with external knowledge sources.

One of the most widely used implementations is the pgvector vector database for AI systems, which integrates directly with PostgreSQL for scalable AI memory.

What vector databases enable in real systems

- Store high-dimensional embeddings at scale for AI retrieval pipelines

- Enable ultra-fast similarity search for semantic search in RAG systems

- Support contextual retrieval for retrieval augmented generation systems in production

- Allow real-time querying of knowledge for enterprise AI architecture systems
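To make "similarity search over embeddings" concrete, here is a minimal sketch of what a vector store does at its core. The `InMemoryVectorStore` class and the 3-dimensional embeddings are made up for illustration; real embeddings have hundreds or thousands of dimensions and come from an embedding model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class InMemoryVectorStore:
    """Toy stand-in for a vector database: brute-force search, no index."""

    def __init__(self):
        self._items = []  # list of (doc_id, embedding)

    def add(self, doc_id, embedding):
        self._items.append((doc_id, list(embedding)))

    def search(self, query_embedding, k=2):
        scored = [
            (doc_id, cosine_similarity(query_embedding, emb))
            for doc_id, emb in self._items
        ]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:k]


store = InMemoryVectorStore()
# Made-up embeddings: the first axis loosely means "about cats".
store.add("cats", [0.9, 0.1, 0.0])
store.add("kittens", [0.8, 0.2, 0.1])
store.add("tax_law", [0.0, 0.1, 0.9])

results = store.search([1.0, 0.0, 0.0], k=2)
top_ids = [doc_id for doc_id, _ in results]  # "cats" first, then "kittens"
```

This brute-force scan compares the query against every stored vector. Production vector databases typically avoid that cost with approximate nearest-neighbor indexes, which trade a little recall for much faster lookups.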

Why they matter in modern AI design

Unlike traditional databases that rely on exact matches, vector databases allow AI systems to retrieve information based on meaning.

In 2026 production deployments, this distinction determines whether your RAG system answers correctly or confidently returns the wrong result.

Popular vector databases for AI systems

Tools such as pgvector, Pinecone, and Milvus are widely used in Python RAG implementations for production AI workflows. For a comparison, see “Which Vector Databases Power Production RAG Pipelines in 2026?”

This is the memory layer of modern AI systems, powering context-aware intelligence at scale.

🤖 How Hybrid Search Improves Accuracy in RAG Systems

Modern RAG systems do not rely on vector search alone. Instead, they use hybrid retrieval strategies in production RAG systems architecture in 2026 to improve both relevance and accuracy.

This approach combines multiple retrieval techniques to ensure the AI system retrieves the most contextually useful information.

Core components of hybrid search

- Semantic search (vector similarity) – understands meaning using embeddings stored in a vector database

- Keyword search (BM25) – captures exact term matching for precise retrieval in AI retrieval pipelines

- Reranking models – reorder results based on relevance scoring in retrieval augmented generation systems in production

In real-world implementations, developers often build full retrieval pipelines using semantic search systems with Python, combining embeddings, filtering, and ranking logic.
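One common way to merge a semantic ranking and a keyword ranking is reciprocal rank fusion (RRF). The guide does not name a specific fusion method, so treat this as one possible choice rather than the required one; the document ids and both input rankings below are invented for the example.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of doc ids into one ranking.

    Each document earns 1 / (k + rank) from every list it appears in.
    k=60 is a commonly used default that dampens the effect of top ranks.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc id for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Invented outputs of a vector search and a BM25 search for the same query.
semantic_ranking = ["doc_a", "doc_b", "doc_c"]
keyword_ranking = ["doc_b", "doc_d", "doc_a"]

fused = reciprocal_rank_fusion([semantic_ranking, keyword_ranking])
```

Here `doc_b` ends up first because it ranks well in both lists, even though neither `doc_c` nor `doc_d` is dropped. RRF only needs ranks, not raw scores, which is convenient because BM25 scores and cosine similarities are not on the same scale.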

Why hybrid search improves AI accuracy

This layered approach ensures that no single retrieval method limits system performance.

In RAG infrastructure for production, hybrid search leads to:

- higher precision in result selection

- better recall across diverse queries

- reduced irrelevant or noisy context

- improved grounding for LLM responses

Role of reranking in RAG systems

After initial retrieval, reranking models evaluate and reorder results to prioritize the most relevant context for the language model.

This step is critical in enterprise AI architecture systems, where accuracy directly impacts decision-making and user trust.

This combination makes retrieval in modern RAG systems significantly more reliable than traditional single-method search.
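The reranking step can be sketched as "re-score the first-stage candidates with a more careful function, then keep the best few". In practice that function is usually a trained relevance model; the `overlap_score` function below is a hypothetical stand-in so the example runs without any model, and the candidate passages are invented.

```python
def overlap_score(query, passage):
    """Hypothetical relevance score: fraction of query terms found in the passage."""
    q_terms = set(query.lower().split())
    p_terms = set(passage.lower().split())
    if not q_terms:
        return 0.0
    return len(q_terms & p_terms) / len(q_terms)


def rerank(query, candidates, score_fn=overlap_score, top_n=2):
    """Re-score (doc_id, text) candidates and return the top_n doc ids."""
    scored = [(score_fn(query, text), doc_id) for doc_id, text in candidates]
    # Sort by score descending; ties broken by doc id for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored[:top_n]]


# Invented first-stage results, in the order the retriever returned them.
candidates = [
    ("doc1", "general notes on databases"),
    ("doc2", "how to create an index in pgvector"),
    ("doc3", "pgvector index tuning for large tables"),
]

top = rerank("pgvector index tuning", candidates)
```

The retriever returned `doc3` last, but the reranker moves it to the front because it matches every query term. Swapping `score_fn` for a real model changes the quality of the ordering without changing the shape of the pipeline.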

👉 Context Engineering in Production RAG Pipelines

Context engineering ensures that only the most relevant and high-quality information is passed to the LLM in production RAG systems architecture in 2026. In simple terms, it acts like a filter that helps the model “focus” on what actually matters instead of being overwhelmed by raw data.

What context engineering includes

- Chunk optimization – breaking content into meaningful sections so each chunk embeds and retrieves well from the vector database

- Context compression – reducing unnecessary information while keeping the core meaning intact

- Metadata filtering – selecting only the most relevant documents based on tags, source, or importance

Without this layer, LLMs often struggle with too much input, leading to confusion, slower responses, and lower-quality answers.
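The three techniques above can be sketched with plain Python. All function names here are illustrative, the chunk sizes are deliberately tiny, and word counts are used as a crude proxy for tokens. Note that `fit_to_budget` simply truncates to a budget; it stands in for compression and is not a summarizer.

```python
def chunk_words(text, chunk_size=5, overlap=2):
    """Split text into overlapping word windows so context carries across chunks."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


def filter_by_metadata(docs, **required):
    """Keep documents whose metadata matches every required key/value pair."""
    return [
        d for d in docs
        if all(d["meta"].get(key) == value for key, value in required.items())
    ]


def fit_to_budget(chunks, max_words):
    """Keep chunks in order until the word budget would be exceeded."""
    kept, used = [], 0
    for chunk in chunks:
        n = len(chunk.split())
        if used + n > max_words:
            break
        kept.append(chunk)
        used += n
    return kept


# Invented documents with metadata tags.
docs = [
    {"text": "Refunds are issued within 30 days.", "meta": {"source": "policy", "year": 2026}},
    {"text": "Old refund rules.", "meta": {"source": "policy", "year": 2019}},
    {"text": "Team lunch menu.", "meta": {"source": "intranet", "year": 2026}},
]

current_policy = filter_by_metadata(docs, source="policy", year=2026)
chunks = chunk_words("a b c d e f g h", chunk_size=5, overlap=2)
```

With these inputs, only the 2026 policy document survives the metadata filter, and the eight-word text becomes two chunks that share the words `d e`. In a real pipeline the filter usually runs inside the database query, and the budget is measured with the model's own tokenizer.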

👉 How LLMs Generate Grounded Responses in RAG Systems

Once relevant context is retrieved and cleaned, the LLM is prompted to generate its response based only on that information.

From a human perspective, this is where the system stops “guessing” and starts “reasoning with evidence.”

This is what makes modern retrieval augmented generation systems in production far more reliable than traditional AI models.
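Grounding is largely enforced through how the prompt is assembled. The sketch below shows one plausible prompt layout; `build_grounded_prompt` is a hypothetical helper, the instruction wording is an assumption, and the actual call to an LLM is left out on purpose.

```python
def build_grounded_prompt(question, passages):
    """Assemble a prompt that restricts the model to numbered sources."""
    if not passages:
        # With no context there is nothing to ground on; let the caller
        # decide whether to refuse or fall back instead of prompting.
        raise ValueError("no context retrieved")
    sources = "\n".join(
        f"[{i}] {text}" for i, text in enumerate(passages, start=1)
    )
    return (
        "Answer the question using only the sources below. "
        "Cite sources as [n]. If the sources do not contain the answer, "
        "say you do not know.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}\nAnswer:"
    )


prompt = build_grounded_prompt(
    "How long do refunds take?",
    ["Refunds are issued within 30 days.", "Refunds go to the original payment method."],
)
```

Numbering the sources lets the model cite them and lets you check each citation afterwards. The explicit "say you do not know" instruction gives the model an allowed alternative to guessing when retrieval comes back thin.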

Key outcomes of grounded generation

- reduced hallucination in real-world AI responses

- stronger factual grounding from trusted sources

- improved accuracy in domain-specific use cases

- more dependable behavior in enterprise systems

The model is no longer inventing answers — it is working like a reasoning layer over real data.

👉 Feedback Loops and Continuous Learning in RAG Systems

Production RAG systems improve over time through continuous feedback. Think of it like a system that “learns from its mistakes” in real usage.

In scalable RAG architectures, this is what keeps performance improving after deployment.

Key feedback mechanisms

- user feedback signals from real interactions

- retrieval quality scoring from system logs

- RAGAS evaluation metrics for response quality

- monitoring pipelines to track system behavior

Over time, the system becomes smarter by learning what works and what doesn’t.
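Retrieval quality scoring from logs can start with two simple metrics: hit rate at k and mean reciprocal rank. This sketch does not use the RAGAS library; the log format (`retrieved` and `relevant` lists per query) is an assumption made for the example, and the entries are invented.

```python
def hit_rate_at_k(logs, k=3):
    """Share of queries where at least one relevant doc is in the top k."""
    if not logs:
        return 0.0
    hits = sum(
        1 for entry in logs
        if set(entry["retrieved"][:k]) & set(entry["relevant"])
    )
    return hits / len(logs)


def mean_reciprocal_rank(logs):
    """Average of 1/rank of the first relevant doc (0 when none is retrieved)."""
    if not logs:
        return 0.0
    total = 0.0
    for entry in logs:
        relevant = set(entry["relevant"])
        for rank, doc_id in enumerate(entry["retrieved"], start=1):
            if doc_id in relevant:
                total += 1.0 / rank
                break
    return total / len(logs)


# Invented log entries: what was retrieved vs. what a reviewer marked relevant.
logs = [
    {"retrieved": ["d1", "d2", "d3"], "relevant": ["d1"]},  # relevant doc at rank 1
    {"retrieved": ["d4", "d5", "d6"], "relevant": ["d5"]},  # relevant doc at rank 2
    {"retrieved": ["d7", "d8", "d9"], "relevant": ["d0"]},  # miss
]

hit_rate = hit_rate_at_k(logs, k=3)
mrr = mean_reciprocal_rank(logs)
```

For these three entries the hit rate is 2/3 and the mean reciprocal rank is 0.5. Tracking both over time shows whether a change to chunking, fusion, or reranking actually moved relevant documents closer to the top.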

🐍 Python Implementation Architecture for Production RAG Systems

A production RAG system architecture built in Python usually feels like multiple small systems working together as one.

A complete hands-on Python RAG system implementation is explained in “build RAG system using Python and Pinecone”, which walks through the full pipeline step by step.

Core components

- FastAPI backend for handling user requests

- embedding pipeline (OpenAI or local models)

- vector databases for AI systems integration (pgvector, Pinecone, Milvus)

- retrieval service layer for fetching relevant data

- LLM orchestration layer for response generation

- evaluation and monitoring pipeline for quality control

Python simply acts as the “connector layer” that brings all AI components together in real systems.
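The "connector layer" idea can be sketched as a small class that wires the components together behind one method. `RagPipeline` and its field names are hypothetical, and every component here is a stub; in a real service, an HTTP handler (for example a FastAPI route) would call `answer()` and the stubs would be replaced by an embedding client, a vector database query, and an LLM call.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class RagPipeline:
    """Wires the layers together; each field is a pluggable component."""
    embed: Callable[[str], List[float]]              # embedding pipeline
    search: Callable[[List[float], int], List[str]]  # retrieval service layer
    generate: Callable[[str, List[str]], str]        # LLM orchestration layer
    log: Callable[[dict], None]                      # evaluation and monitoring hook

    def answer(self, question: str, k: int = 3) -> str:
        query_vector = self.embed(question)
        passages = self.search(query_vector, k)
        reply = self.generate(question, passages)
        # Record what was retrieved so quality can be evaluated later.
        self.log({"question": question, "passages": passages, "reply": reply})
        return reply


# Stub components so the sketch runs without external services.
events = []
pipeline = RagPipeline(
    embed=lambda text: [float(len(text))],
    search=lambda vector, k: ["stub passage"][:k],
    generate=lambda question, passages: f"Based on {len(passages)} passage(s): ...",
    log=events.append,
)

reply = pipeline.answer("What is pgvector?")
```

Keeping each layer behind a plain callable makes it easy to swap pgvector for Pinecone, or one model for another, and to test the orchestration logic with stubs like the ones above.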

👉 Common RAG System Design Mistakes to Avoid in Production

Even well-designed RAG systems can fail if a few critical architectural decisions are missed during implementation. For details, see “7 Critical RAG Production Pitfalls (Python Fixes)”.

Frequent mistakes

- relying only on vector search without hybrid retrieval

- missing reranking layer in the retrieval pipeline

- poor chunking strategy that breaks semantic context before content reaches the vector database

- no evaluation or testing framework for response quality

- missing feedback loop after deployment

- sending too much irrelevant context to the LLM, causing token overload

Why these mistakes matter

In practice, these issues often reduce system accuracy more than model selection itself. A detailed breakdown of these issues is available in RAG system production mistakes and fixes.

A well-chosen LLM cannot compensate for poor retrieval design, weak context engineering, or missing evaluation loops.

Final system insight

If you step back, a production RAG system is not just an AI pipeline — it is a carefully balanced architecture that decides what to retrieve, what to ignore, and how to reason over knowledge.

This balance is what defines modern retrieval augmented generation systems in production, especially in enterprise-scale AI applications.

That’s what makes RAG systems powerful in real-world production environments.

Final Production Blueprint Summary for RAG Systems 2026

A production-ready RAG system in 2026 is not a single feature or tool — it is a multi-layer AI architecture designed for reliability, scalability, and grounded intelligence.

Core components of a production RAG system

- Agentic reasoning layer for planning and decision-making in production RAG systems architecture in 2026

- Hybrid retrieval system combining semantic and keyword search

- Vector database memory layer for storing embeddings in vector database-based AI systems

- Context engineering pipeline for filtering and optimizing retrieved data

- LLM generation layer for grounded response creation

- Continuous feedback loop for system improvement and evaluation

Final insight

RAG is no longer a single feature. It is a full system architecture in which retrieval, reasoning, and generation work together as one unified system.

In modern retrieval augmented generation systems in production, success depends not on a single model, but on how well these layers are designed, connected, and optimized.

Frequently Asked Questions (FAQ)

What is a RAG system in AI?

A RAG (Retrieval-Augmented Generation) system combines retrieval and generation to produce grounded and accurate AI responses using external data sources such as documents, databases, and APIs. In modern production RAG systems architecture in 2026, it is a core design pattern for building reliable AI applications.

Why are vector databases important in RAG?

Vector databases store embeddings and enable semantic similarity search, allowing AI systems to retrieve information based on meaning rather than exact keywords. They form the memory layer in vector database-based AI systems used in production environments.

What is agentic RAG?

Agentic RAG is an advanced form of retrieval system that includes planning, reasoning, and decision-making layers before fetching data. This improves retrieval quality in intelligent AI retrieval pipelines for enterprise systems.

Is RAG better than fine-tuning?

In most production use cases where the underlying data changes frequently, yes. RAG is typically more cost-effective, faster to update, and easier to maintain than fine-tuning, because new knowledge is added by updating the indexed documents rather than retraining the model.

What tools are used in RAG systems?

Common tools and frameworks include:

- pgvector

- Pinecone

- LangChain

- LlamaIndex

- OpenAI APIs

These are widely used in Python-based RAG system implementations for production AI applications.

AI Code With Haritha – AI, RAG Systems, and Vector Database Engineering Tutorials