Vector Databases & Semantic Search FAQ

Q: What exactly is a vector database, and why is it essential for AI?

A vector database is a specialized storage engine that stores and indexes data as embeddings: high-dimensional numeric vectors, usually kept alongside the original text and metadata rather than replacing them.

It serves as the “long-term memory” for AI applications. Where a traditional SQL query matches exact values or keywords, a vector database uses similarity search to find items that are mathematically close to a user’s query. That makes it a common backbone for fast, context-aware retrieval-augmented generation (RAG) systems.

Q: How does “meaning” get stored in a database?

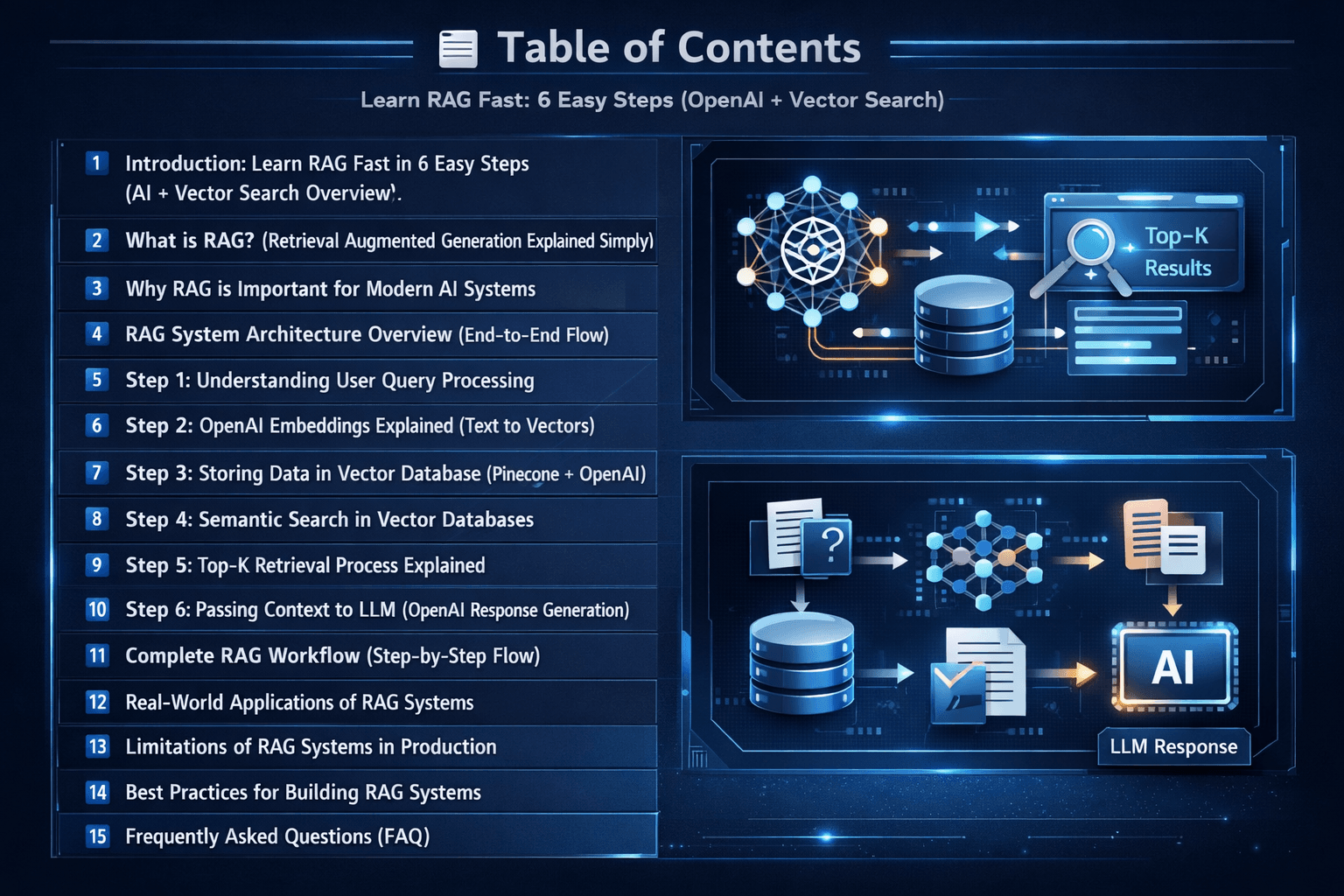

Meaning is captured through “vector embeddings”: fixed-length lists of numbers that a machine learning model generates to represent the semantic content of an object.

When you “embed” a piece of text or an image, the model places it in a high-dimensional space. Objects with similar meanings are placed closer together mathematically, allowing the database to retrieve relevant information even if the exact words don’t match.
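To make “closer together mathematically” concrete, here is a minimal sketch using cosine similarity, one common closeness measure. The three-dimensional vectors and the name `TOY_EMBEDDINGS` are hand-made assumptions for illustration; real embeddings come from a model and typically have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Hypothetical, hand-written vectors (not produced by any real model).
TOY_EMBEDDINGS = {
    "blue car": [0.9, 0.8, 0.1],
    "azure vehicle": [0.85, 0.75, 0.2],
    "banana bread recipe": [0.1, 0.2, 0.95],
}

related = cosine_similarity(TOY_EMBEDDINGS["blue car"], TOY_EMBEDDINGS["azure vehicle"])
unrelated = cosine_similarity(TOY_EMBEDDINGS["blue car"], TOY_EMBEDDINGS["banana bread recipe"])
print(f"related: {related:.3f}, unrelated: {unrelated:.3f}")
```

With these toy values the two car phrases score close to 1, while the unrelated phrase scores much lower, even though “blue car” and “azure vehicle” share no words.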

Q: What is the difference between Semantic Search and traditional SQL search?

SQL search looks for specific character matches (e.g., the literal string “blue car”), whereas semantic search matches on intent and concept, so the same query can also surface a document that says “azure vehicle”.

A literal string match returns nothing when the wording differs. Semantic search compares embeddings instead, and at scale it typically relies on Approximate Nearest Neighbor (ANN) algorithms, which trade a small amount of recall for much lower query latency. This is especially useful for unstructured data such as text extracted from PDFs.
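The contrast can be sketched in a few lines. The example below compares a literal substring search with an exact (brute-force) nearest-neighbor search; ANN indexes approximate the same ranking without scoring every document. The document texts, ids, and vectors are made-up assumptions, and `keyword_search` and `exact_top_k` are hypothetical helper names, not part of any library.

```python
import heapq
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def keyword_search(query, documents):
    """Return ids of documents whose text contains the query as a literal substring."""
    needle = query.lower()
    return [doc_id for doc_id, (text, _) in documents.items() if needle in text.lower()]


def exact_top_k(query_vector, documents, k=2):
    """Score every document against the query and return the k most similar ids."""
    scored = (
        (cosine_similarity(query_vector, vector), doc_id)
        for doc_id, (_, vector) in documents.items()
    )
    return [doc_id for _, doc_id in heapq.nlargest(k, scored)]


# Hypothetical documents: id -> (text, hand-written toy embedding).
DOCUMENTS = {
    "doc-1": ("Azure vehicle for sale, low mileage", [0.85, 0.75, 0.2]),
    "doc-2": ("Banana bread recipe", [0.1, 0.2, 0.95]),
    "doc-3": ("Navy sedan, one owner", [0.8, 0.7, 0.3]),
}

# Assumed toy embedding for the query text "blue car".
QUERY_VECTOR = [0.9, 0.8, 0.1]

print(keyword_search("blue car", DOCUMENTS))
print(exact_top_k(QUERY_VECTOR, DOCUMENTS, k=2))
```

Brute force is fine for a handful of documents, but its cost grows linearly with the collection, which is why production systems use ANN indexes instead.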

Q: Which vector database should I choose for a production RAG pipeline?

The choice depends on your existing stack and your scale: pgvector suits SQL-integrated stacks, while Pinecone (a managed service) and Qdrant (open source, with a managed cloud offering) are common choices when you want a dedicated vector store.

For developers deep in the PostgreSQL ecosystem, pgvector 0.7+ is an excellent starting point. For workloads that need very large indexes and sub-10ms query latency, dedicated vector stores often perform better, though results depend on the dataset, index settings, and hardware, so benchmark on your own data before committing.

Q: How do vector databases help in reducing LLM hallucinations?

They provide “grounding” by supplying the LLM with retrieved context from your internal data before it generates a response.

Instead of letting an LLM guess, a vector database retrieves the sections of your private documents that are most similar to the question. This context is inserted into the prompt, steering the model to answer from your specific facts rather than from its training data alone. Grounding reduces hallucinations but does not eliminate them, since the model can still misread or ignore the supplied context.
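As a rough illustration of how retrieved context reaches the model, the sketch below assembles a prompt from a question and a list of retrieved text chunks. The function name `build_grounded_prompt`, the prompt wording, and the sample chunk are assumptions for illustration; real pipelines vary in how they phrase and format this step.

```python
def build_grounded_prompt(question, retrieved_chunks):
    """Place retrieved text ahead of the question and ask the model to stay within it."""
    if retrieved_chunks:
        # Number each chunk so an answer can point back to its source.
        context = "\n\n".join(
            f"[{i}] {chunk}" for i, chunk in enumerate(retrieved_chunks, start=1)
        )
    else:
        context = "(no relevant context was retrieved)"
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say you do not know.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


# Hypothetical chunk, standing in for text returned by a vector search.
prompt = build_grounded_prompt(
    "What is the refund window?",
    ["Refunds are accepted within 30 days of purchase."],
)
print(prompt)
```

Telling the model to admit when the context lacks the answer is one common way to discourage guessing, though it does not guarantee compliance.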

Q: Is it difficult to scale vector indexes as my data grows?

Scaling is manageable with established practices such as HNSW (Hierarchical Navigable Small World) indexes, index sharding, and careful memory management.

As your data grows to millions of vectors, memory overhead becomes a factor, because the raw vectors and often the index structure are held in RAM. Production deployments also need monitoring for “semantic drift” and tuning of index configurations so that retrieval remains both fast and secure.
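A back-of-the-envelope calculation shows why memory becomes a factor. The sketch below estimates raw storage for float32 vectors plus an optional per-vector allowance for graph links. The link figures are rough assumptions rather than the accounting of any particular engine, and the 1,536-dimension example is simply an illustrative size.

```python
def estimate_index_memory_bytes(
    num_vectors,
    dimensions,
    bytes_per_value=4,      # float32 components
    links_per_vector=0,     # assumed graph links per vector (0 = raw vectors only)
    bytes_per_link=4,       # assumed 32-bit neighbor ids
):
    """Rough memory estimate: raw vector storage plus optional graph-link overhead."""
    vector_bytes = num_vectors * dimensions * bytes_per_value
    link_bytes = num_vectors * links_per_vector * bytes_per_link
    return vector_bytes + link_bytes


def to_gib(num_bytes):
    """Convert bytes to gibibytes (2**30 bytes)."""
    return num_bytes / (1024 ** 3)


raw = estimate_index_memory_bytes(1_000_000, 1536)
with_links = estimate_index_memory_bytes(1_000_000, 1536, links_per_vector=32)
print(f"raw vectors: {to_gib(raw):.2f} GiB")
print(f"with assumed links: {to_gib(with_links):.2f} GiB")
```

One million 1,536-dimensional float32 vectors already need about 5.7 GiB before any index overhead, which is why sharding and reduced-precision storage come up quickly at larger scales.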